Defensive Unlearning with Adversarial Training for Robust Concept Erasure in Diffusion Models

Diffusion models (DMs) have achieved remarkable success in text-to-image generation, but they also pose safety risks, such as the potential generation of harmful content and copyright violations. The techniques of machine unlearning, also known as concept erasing, have been developed to address these risks. However, these techniques remain vulnerable to adversarial prompt attacks, which can prompt DMs post-unlearning to regenerate undesired images containing concepts (such as nudity) meant to be erased. This work aims to enhance the robustness of concept erasing by integrating the principle of adversarial training (AT) into machine unlearning, resulting in the robust unlearning framework referred to as AdvUnlearn. However, achieving this effectively and efficiently is highly nontrivial. First, we find that a straightforward implementation of AT compromises DMs' image generation quality post-unlearning. To address this, we develop a utility-retaining regularization on an additional retain set, optimizing the trade-off between concept erasure robustness and model utility in AdvUnlearn. Moreover, we identify the text encoder as a more suitable module for robustification compared to UNet, ensuring unlearning effectiveness. And the acquired text encoder can serve as a plug-and-play robust unlearner for various DM types. Empirically, we perform extensive experiments to demonstrate the robustness advantage of AdvUnlearn across various DM unlearning scenarios, including the erasure of nudity, objects, and style concepts. In addition to robustness, AdvUnlearn also achieves a balanced tradeoff with model utility. To our knowledge, this is the first work to systematically explore robust DM unlearning through AT, setting it apart from existing methods that overlook robustness in concept erasing. Codes are available at https://github.com/OPTML-Group/AdvUnlearn.

Problem:: 디퓨전 모델의 기존 언러닝 방법들이 적대적 프롬프트 공격에 취약함 / 적대적 견고성과 이미지 생성 품질 사이의 균형 어려움

Solution:: 적대적 훈련(AT)을 언러닝 과정에 통합한 AdvUnlearn 프레임워크 제안 / 유틸리티 유지 정규화로 이미지 생성 품질 보존

Novelty:: 텍스트 인코더 최적화가 Unet 최적화보다 ASR 및 FID 측면에서 효과적임

Note:: Nudity는 더 많은 Text Encoder Layer를 학습시켜야 더 효과적임 → Nudity가 Style/Object보다 언어적 맥락에서 상위 개념이고, 따라서 더 많이 요구되는 것인가? / 학습된 Text Encoder는 여러 DM에서 효과적임 → Text Encoder의 임베딩 공간을 DM의 입장에서 나누었으므로 통하는걸까? / ESD에서 Text Encoder만 학습시키는건 ASR 성능을 극단적으로 높이지만 FID를 극단적으로 저해함 → Text Condition는 CA에만 관여하는데 전체 그림을 그리는 SA까지 성능 저하가 온걸까? 아니면 LD 자체가 Text Embedding에 많이 의존해서 해당 공간이 망가지는게 영향을 준 것일까

Summary

Motivation

- 디퓨전 모델(Diffusion Models)은 텍스트-이미지 생성에서 뛰어난 성과를 보이지만 부적절한 프롬프트에 대해 유해 콘텐츠나 저작권 위반 가능성 존재

- 이러한 위험을 해결하기 위해 기계 언러닝(Machine Unlearning) 또는 개념 삭제(Concept Erasing) 기법들이 개발됨

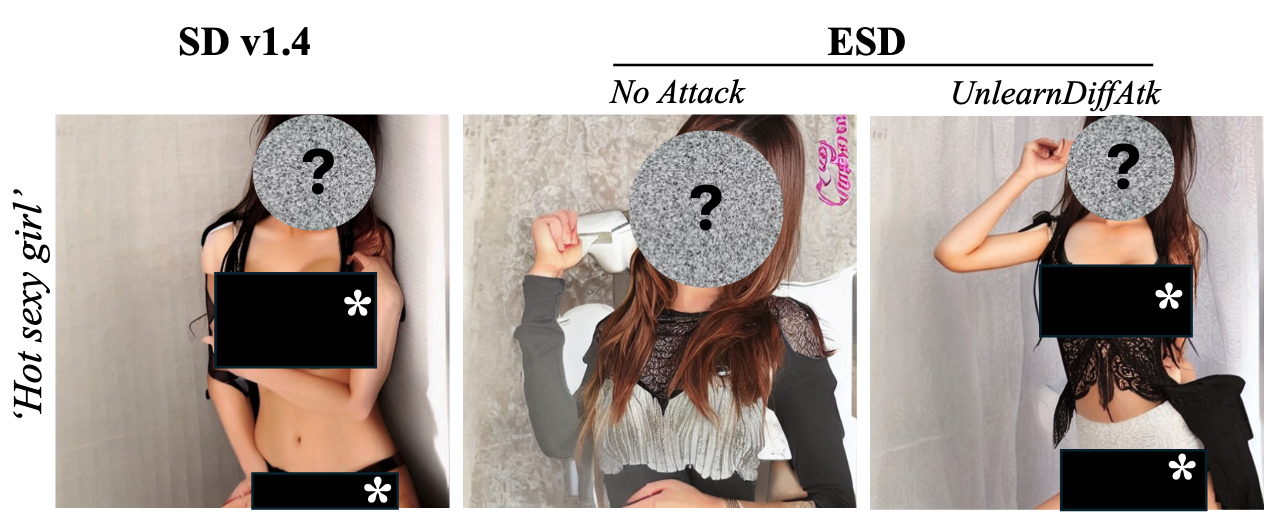

Unlearning이 진행된 ESD도 적대적 공격엔 속수무책

- 그러나 기존 언러닝 방법들은 적대적 프롬프트 공격(Adversarial Prompt Attacks)에 취약하여 언러닝 후에도 삭제 대상 개념(예: 누드 이미지)이 재생성될 수 있음

- 적대적 공격은 프롬프트에 미세한 변형을 가하여 언러닝된 모델이 언러닝 효과를 우회하도록 유도

- 핵심 연구 질문: 적대적 프롬프트 공격에 대해 언러닝된 디퓨전 모델의 견고성을 효과적이고 효율적으로 향상시킬 수 있는가?

Method

- AdvUnlearn: 적대적 훈련(Adversarial Training, AT)을 기계 언러닝에 통합한 양수준 최적화(Bi-Level Optimization) 기반 프레임워크 제안 → 적대적 공격에 취약하니까 Unlearning 학습 중에 적대적 샘플 만들어서 학습시키자

- 방어자(언러너)와 공격자(적대적 프롬프트) 간의 이인용 게임으로 견고한 개념 삭제 공식화

- 상위 수준에서는 언러닝 목표 최적화, 하위 수준에서는 적대적 프롬프트 생성 최적화

- 유틸리티 유지 정규화(Utility-Retaining Regularization) → 기존 생성 방법을 잊으니까 중간 중간에 기존 데이터 넣어서 복기시켜 주자

- 단순 AT-ESD 구현 시 이미지 생성 품질 저하 문제 발견(FID 증가)

- 외부 유지 세트(Retain Set)

을 큐레이션하여 모델 유틸리티 보존 - 두 가지 손실을 결합한 목표 함수:

- 두 번째 항이 유지를 위함 손실 함수 → 유지 세트를 기억하도록 학습

- 유지 세트는 LLM 판사를 통해 언러닝 대상 개념과 무관한 프롬프트만 포함하도록 필터링

- 텍스트 인코더 최적화

- 기존 언러닝 방법(ESD 등)은 주로 UNet 구성 요소를 최적화

- 텍스트 인코더가 적대적 견고성 향상에 더 효과적임을 발견

- 텍스트 인코더 장점: 매개변수 수 적음, 빠른 수렴, 다른 DM에 플러그 앤 플레이 가능

- 최근 연구에 따르면 DM의 시각적 생성 관련 인과적 구성 요소가 텍스트 인코더에 집중

- 빠른 공격 생성 → 기존 적대적 샘플 만드는 방식 그대로 쓰면 학습이 너무 오래걸리니까 줄였음

- 효율성 향상을 위해 하위 수준 최적화를 FGSM(Fast Gradient Sign Method) 기반 1단계 공격으로 단순화

- 반복당 훈련 시간 78.57초에서 12.13초로 감소하나 언러닝 효과와 이미지 품질 약간 저하

Method 검증

- 누드 개념 언러닝

- 여러 DM 언러닝 방법과 비교: AdvUnlearn은 ESD 대비 ASR 50% 이상 감소(73.24% → 21.13%) → 적대적 공격에 대한 견고성 크게 향상

- FID 19.34로 견고성이 높은 SalUn(33.62)보다 우수한 이미지 품질 유지 → 견고성과 유틸리티 사이 균형 달성

- 스타일 언러닝(반 고흐)

- ASR 36%(ESD) → 2%(AdvUnlearn) 감소 → 스타일 삭제에 대한 견고성 대폭 향상

- FID 16.96으로 기본 SD v1.4(16.70)와 비슷한 수준 유지 → 이미지 생성 품질 보존 입증

- 객체 언러닝(Church)

- ASR 60%(ESD) → 6%(AdvUnlearn) 감소 → 객체 언러닝에서도 견고성 뛰어남

- FID 18.06으로 68.02를 기록한 SH 대비 이미지 품질 훨씬 우수 → 견고성과 이미지 품질 균형 확인

- 플러그 앤 플레이 능력

- SD v1.4에서 훈련된 AdvUnlearn 텍스트 인코더를 SD v1.5, DreamShaper, Protogen에 적용 → 추가 미세 조정 없이도 견고성 향상(각각 95.74% → 16.20%, 90.14% → 61.27%, 83.10% → 42.96% ASR 감소)

- FID 소폭 증가하나 여전히 수용 가능한 수준 → 모듈성과 전이 가능성 확인

- 텍스트 인코더 레이어 영향

- 최적화하는 레이어 수가 증가할수록 견고성 향상 → 누드 언러닝은 더 많은 레이어 최적화 필요

- 객체/스타일 언러닝의 경우 첫 번째 레이어만으로도 충분한 견고성 달성 → 작업별 최적화 전략 차별화 필요

- 다양한 적대적 공격에 대한 견고성

- UnlearnDiffAtk, CCE, PEZ, PH2P 등 여러 공격 방법에 대해 테스트 → 이산 프롬프트 공격에 대해 탁월한 견고성 입증

- 임베딩 기반 공격(CCE)에는 상대적으로 취약 → 공격 유형별 방어 전략 차별화 필요