Relation DETR: Exploring Explicit Position Relation Prior for Object Detection

This paper presents a general scheme for enhancing the convergence and performance of DETR (DEtection TRansformer). We investigate the slow convergence problem in transformers from a new perspective, suggesting that it arises from the self-attention that introduces no structural bias over inputs. To address this issue, we explore incorporating position relation prior as attention bias to augment object detection, following the verification of its statistical significance using a proposed quantitative macroscopic correlation (MC) metric. Our approach, termed Relation-DETR, introduces an encoder to construct position relation embeddings for progressive attention refinement, which further extends the traditional streaming pipeline of DETR into a contrastive relation pipeline to address the conflicts between non-duplicate predictions and positive supervision. Extensive experiments on both generic and task-specific datasets demonstrate the effectiveness of our approach. Under the same configurations, Relation-DETR achieves a significant improvement (+2.0% AP compared to DINO), state-of-the-art performance (51.7% AP for 1× and 52.1% AP for 2× settings), and a remarkably faster convergence speed (over 40% AP with only 2 training epochs) than existing DETR detectors on COCO val2017. Moreover, the proposed relation encoder serves as a universal plug-in-and-play component, bringing clear improvements for theoretically any DETR-like methods. Furthermore, we introduce a class-agnostic detection dataset, SA-Det-100k. The experimental results on the dataset illustrate that the proposed explicit position relation achieves a clear improvement of 1.3% AP, highlighting its potential towards universal object detection. The code and dataset are available at https://github.com/xiuqhou/Relation-DETR.

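Problem:: Self-attention has no explicit structural bias, so convergence is slow / The benefit of one-to-one matching (duplicate removal) conflicts with its drawback (insufficient positive supervision)

Solution:: Inject relations explicitly into the decoder's self-attention / Use one-to-many matching for queries that are not matched

Novelty:: Shows statistically, via the proposed MC metric, that relations actually help detection

Note:: The problem and solution are laid out cleanly, and the proposed MC metric supplies the evidence for why this method was proposed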

Summary

Motivation

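- Slow convergence and performance issues in DETR models

- Self-attention carries no structural bias over its inputs, so convergence is slow → at present, structural bias is obtained only by learning it from data

- Hungarian matching is used for non-duplicate prediction → because it is one-to-one matching, positive supervision is insufficient → the conflict between these two factors lowers training efficiency

- Many studies address convergence speed, but few improve self-attention, the most widely used component

- Over the development of object detection, explicitly injecting structural bias such as relations between bounding boxes has improved performance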

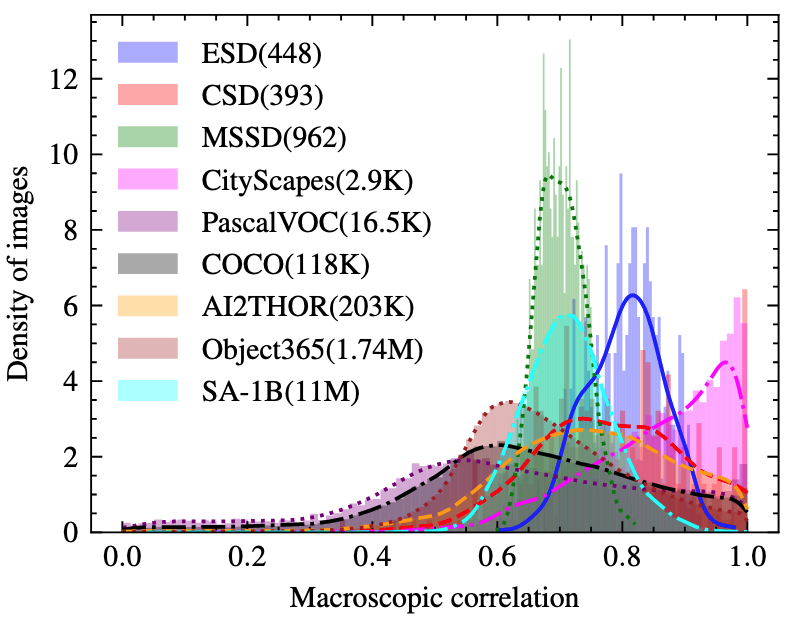

Statistical Significance of Object Position Relation

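- The statistical significance of position relations between objects is measured with the Macroscopic Correlation (MC) metric, which is based on the Pearson correlation

- The metric is defined in terms of the Pearson correlation coefficient between each pair of bounding boxes in an image and the number of objects in that image

- Analysis of various datasets (e.g., COCO, PascalVOC) demonstrates that object positions within an image are significantly correlated with one another

- The analysis finds high correlation between object positions in most datasets, and higher still in data tied to a specific task → this confirms that introducing explicit position relation information into DETR is justified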
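
To make the idea of a Pearson-based, per-image correlation score concrete, here is a minimal pure-Python sketch. The exact MC formula is not given in these notes, so the aggregation used below (root mean square of the Pearson coefficient over all box pairs, with each box treated as a 4-vector) is an assumption for illustration, not the paper's definition. The names `pearson` and `macroscopic_correlation` are hypothetical.

```python
import math
from itertools import combinations


def pearson(u, v):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(u)
    mean_u, mean_v = sum(u) / n, sum(v) / n
    cov = sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v))
    std_u = math.sqrt(sum((a - mean_u) ** 2 for a in u))
    std_v = math.sqrt(sum((b - mean_v) ** 2 for b in v))
    if std_u == 0 or std_v == 0:
        return 0.0  # assumption: a constant vector counts as uncorrelated
    return cov / (std_u * std_v)


def macroscopic_correlation(boxes):
    """Assumed aggregation: RMS of pairwise Pearson coefficients in one image.

    `boxes` is a list of (x, y, w, h) tuples for the objects in the image.
    """
    pairs = list(combinations(boxes, 2))
    if not pairs:
        return 0.0  # fewer than two objects: no pairwise relation to measure
    return math.sqrt(sum(pearson(a, b) ** 2 for a, b in pairs) / len(pairs))
```

Under this assumed aggregation the score lies in [0, 1], and averaging it over a dataset would give one number per dataset that can be compared across COCO, PascalVOC, and task-specific data.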

Method

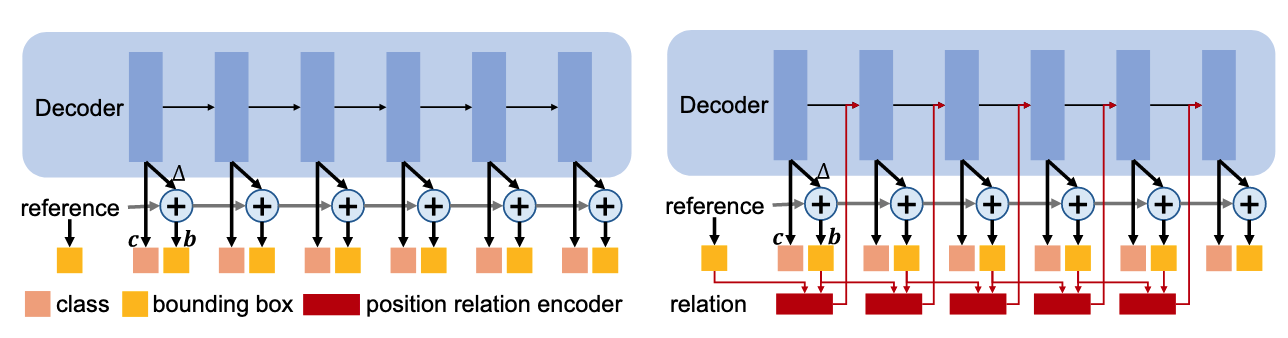

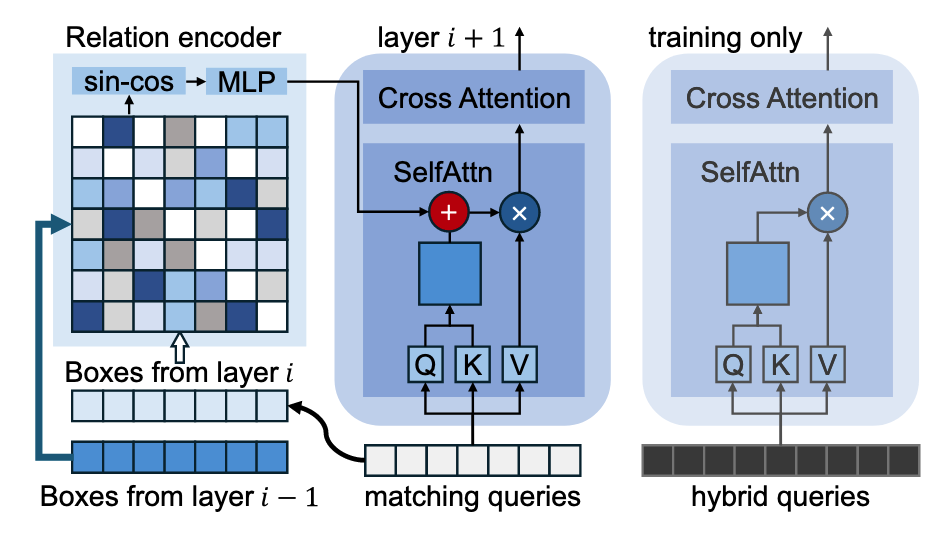

Position Relation Encoder

Left: the original DETR; right: the proposed method, which feeds relations into the self-attention over the decoder's object queries

- Introduces an explicit position relation

- A position relation encoder converts the relative position of two bounding boxes into a high-dimensional embedding: the log of the relative change in center distance and in width and height between the two boxes → sine-cosine encoding → linear transformation

- The embedding is used as an attention bias through progressive attention refinement, which gives self-attention an explicit structural bias
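
The three stages above (log-scale box relation → sine-cosine encoding → linear transformation, then use as an attention bias) can be sketched in pure Python as follows. This is an illustrative sketch, not the authors' implementation: the specific ratio forms inside the logarithms, the `eps`, `num_freqs`, `temperature`, and `scale` values, and all function names are assumptions chosen to match the description.

```python
import math


def box_relation(src, tgt, eps=1e-5):
    """4-d log-scale relation between two (cx, cy, w, h) boxes (assumed form)."""
    (x1, y1, w1, h1), (x2, y2, w2, h2) = src, tgt
    return [
        math.log(abs(x1 - x2) / (w1 + eps) + 1.0),  # center offset in x, relative to width
        math.log(abs(y1 - y2) / (h1 + eps) + 1.0),  # center offset in y, relative to height
        math.log((w1 + eps) / (w2 + eps)),          # width ratio
        math.log((h1 + eps) / (h2 + eps)),          # height ratio
    ]


def sincos_embed(values, num_freqs=4, temperature=10000.0, scale=100.0):
    """Expand each scalar into num_freqs (sin, cos) pairs."""
    out = []
    for v in values:
        for k in range(num_freqs):
            freq = temperature ** (k / num_freqs)
            out.append(math.sin(scale * v / freq))
            out.append(math.cos(scale * v / freq))
    return out


def relation_bias(embedding, weights):
    """Linear transformation: one scalar bias per attention head.

    `weights` has shape (num_heads, len(embedding)); in a real model it is learned.
    """
    return [sum(w * e for w, e in zip(row, embedding)) for row in weights]


def biased_softmax(logits, bias):
    """Attention weights for one query: softmax(logits + bias) over the keys."""
    scores = [l + b for l, b in zip(logits, bias)]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]
```

Because the bias is added before the softmax, a larger relation value for a pair of boxes directly raises the attention weight between the corresponding queries, which is the structural bias that plain self-attention lacks.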

Contrast Relation Pipeline

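- Matching queries (relation-based self-attention, minimizing duplicate predictions) and hybrid queries (conventional self-attention, allowing diverse positive predictions) are used in parallel to strengthen positive-sample supervision → this appears to follow the training procedure of H-DETR

- Matching queries have a corresponding GT, so they use one-to-one matching; hybrid queries have no corresponding GT, so they search for candidates with one-to-many matching → seemingly inspired by the DAC-DETR methodology

- Hybrid queries are used only during training, where they provide sufficient positive samples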
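
The difference between the two matching schemes can be illustrated with a toy cost matrix. The sketch below is a simplification under stated assumptions: a brute-force search over permutations stands in for the Hungarian algorithm (only practical for a handful of queries), and one-to-many matching is implemented by repeating each ground truth `k` times before one-to-one matching, which is the H-DETR-style construction and may differ from what Relation-DETR does. Function names and the cost values are hypothetical.

```python
from itertools import permutations


def one_to_one_match(cost):
    """Minimum-cost assignment of one distinct query to each ground truth.

    cost[q][g] is the cost of assigning query q to ground truth g.
    Requires at least as many queries as ground truths.
    Returns a dict {gt_index: query_index}.
    """
    num_queries, num_gts = len(cost), len(cost[0])
    best, best_cost = None, float("inf")
    for queries in permutations(range(num_queries), num_gts):
        total = sum(cost[q][g] for g, q in enumerate(queries))
        if total < best_cost:
            best, best_cost = queries, total
    return {g: q for g, q in enumerate(best)}


def one_to_many_match(cost, k):
    """Give each ground truth k distinct queries by repeating its column k times."""
    num_gts = len(cost[0])
    repeated = [[row[g] for g in range(num_gts) for _ in range(k)] for row in cost]
    assignment = one_to_one_match(repeated)
    matches = {g: [] for g in range(num_gts)}
    for col, q in assignment.items():
        matches[col // k].append(q)  # columns g*k .. g*k+k-1 belong to ground truth g
    return matches


# Toy example: 4 queries, 2 ground truths.
cost = [
    [0.1, 0.9],
    [0.2, 0.8],
    [0.9, 0.1],
    [0.8, 0.3],
]
single = one_to_one_match(cost)        # one positive query per ground truth
multiple = one_to_many_match(cost, 2)  # two positive queries per ground truth
```

In the toy example, one-to-one matching leaves two of the four queries without a positive target, while one-to-many matching turns all four into positives, which is the extra supervision the hybrid branch is meant to supply during training.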

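Method Validation

- Quantitative experimental comparison on COCO 2017 and several task-specific datasets (CSD, MSSD)

- Consistent gains in AP, AP@50, and AP@75 over existing DETR models, with faster convergence verified

- Generality of the relation encoder verified (as a plug-in-and-play component it also yields consistent gains in other DETR-based methods)

- General applicability verified on SA-Det-100k, a class-agnostic detection dataset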